How to Calibrate a Scale with a Nickel – Full Guide

Content

- 1 The Short Answer: Yes, a Nickel Can Calibrate Your Scale

- 2 Why the Nickel Is a Reliable Calibration Reference

- 3 Step-by-Step: How to Calibrate a Small Digital Scale with a Nickel

- 4 Common Calibration Problems and How to Fix Them

- 5 Nickel Calibration vs. Certified Weight Sets: Which Should You Use?

- 6 How Truck Scale Calibration Works — A Completely Different Process

- 7 Signs Your Truck Scale Needs Calibration

- 8 Truck Scale Calibration Frequency and Regulatory Requirements

- 9 Maintaining Scale Accuracy Between Calibration Events

- 10 The Legal and Financial Stakes of Inaccurate Scale Calibration

- 11 Choosing the Right Scale for Your Application

- 12 Quick Reference: Calibration Checklist for Small Scales and Truck Scales

The Short Answer: Yes, a Nickel Can Calibrate Your Scale

A U.S. nickel has a nominal weight of 5.000 grams, making it one of the most convenient everyday reference objects for checking and calibrating small digital scales. If your scale reads 5.00g when you place a single nickel on it, the calibration is accurate to within the coin's tolerance. Stack two nickels and you should see 10.00g; four nickels should read 20.00g. This method works well for pocket scales, kitchen scales, and jewelry scales: any device with a maximum capacity under a few hundred grams.

However, this approach has clear limits. For industrial weighing equipment — particularly a truck scale, also called a weighbridge — nickels are completely impractical. A truck scale routinely measures loads between 20,000 and 80,000 pounds. Calibrating such a system requires certified test weights, legal-for-trade documentation, and in many jurisdictions, a licensed inspector. Understanding where the nickel method applies, and where it absolutely does not, is the foundation of smart scale calibration practice.

Why the Nickel Is a Reliable Calibration Reference

The U.S. Mint has maintained a strict specification for the nickel since 1866. Each coin must weigh 5.000 grams with a tolerance of ±0.194 grams, though in practice most circulating nickels fall within a much tighter range. The coin is composed of 75% copper and 25% nickel, a composition that resists significant oxidation or mass loss over time under normal conditions.

Compared to other common coins, the nickel stands out because a single coin has a round mass in the metric system. Quarters weigh 5.670g, dimes weigh 2.268g, and pennies weigh 2.500g (3.11g for the older copper cents made until 1982), so none of them gives a clean 5g or 10g reference from one coin of any date. The nickel's metric-friendly weight is why it became the go-to coin for hobbyists, jewelers, and small-business owners who need a quick calibration check.

That said, a heavily worn nickel can lose measurable mass. Coins that have been in circulation for decades may weigh as little as 4.8g. For critical applications, always use newer coins or — better still — invest in a certified calibration weight set, which you can purchase for under $20 online.
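
To see how the mint tolerance grows when coins are stacked, the sketch below computes the nominal mass and the worst-case range for a given number of nickels. The function name `nickel_stack_range` is hypothetical. The 5.000g and ±0.194g figures are the ones quoted above, and the code assumes the worst case, in which every coin is off in the same direction.

```python
NICKEL_NOMINAL_G = 5.000    # nominal mass per coin, from the text above
NICKEL_TOLERANCE_G = 0.194  # per-coin mint tolerance, from the text above


def nickel_stack_range(count: int) -> tuple[float, float, float]:
    """Return (low, nominal, high) mass in grams for a stack of nickels.

    Worst-case assumption: every coin deviates in the same direction,
    so the tolerance adds up linearly with the number of coins.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    nominal = count * NICKEL_NOMINAL_G
    spread = count * NICKEL_TOLERANCE_G
    return (round(nominal - spread, 3), nominal, round(nominal + spread, 3))


if __name__ == "__main__":
    for n in (1, 2, 4):
        low, nominal, high = nickel_stack_range(n)
        print(f"{n} nickel(s): {low:.3f}g to {high:.3f}g (nominal {nominal:.3f}g)")
```

For a single coin the range is 4.806g to 5.194g. In practice most coins sit much closer to nominal, so this is an outer bound rather than an expected error.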

Step-by-Step: How to Calibrate a Small Digital Scale with a Nickel

Follow this process carefully. Rushing through calibration defeats the purpose and can cause ongoing measurement errors.

Step 1 — Choose a Stable Surface

Place the scale on a flat, hard surface away from vibration sources such as HVAC vents, washing machines, or foot traffic areas. Even minor vibrations cause readout fluctuations. A granite countertop or solid wood table works well. Avoid placing the scale near open windows where air currents can affect the load cell reading.

Step 2 — Power On and Allow Warm-Up Time

Turn the scale on and let it sit for at least 3 to 5 minutes before calibrating. Load cells are sensitive to temperature changes, and a scale that has been stored in a cold car or warm cabinet will drift during its first few minutes of operation. Waiting allows the internal electronics to reach thermal equilibrium.

Step 3 — Zero the Scale

Remove everything from the weighing platform. Press the "Tare" or "Zero" button. The display should read exactly 0.00g. If it does not return to zero on its own, check whether the platform is touching a wall or object on the side, which can cause mechanical bias.

Step 4 — Enter Calibration Mode

Most digital scales have a dedicated calibration mode accessed by holding a specific button (often labeled "CAL" or "MODE") for 3 to 5 seconds. The display will typically show "CAL" followed by the target weight it expects. Consult your scale's manual if you are unsure which button to hold — some brands require pressing two buttons simultaneously.

Step 5 — Place the Nickel on the Platform

When the display shows "CAL 5" or a similar prompt, place one U.S. nickel on the center of the platform. Centering matters — off-center placement can produce a reading that differs from the true weight by 0.1g to 0.3g on low-quality scales due to uneven load cell distribution.

Step 6 — Confirm and Verify

The scale will process the input and return to normal weighing mode. Remove the nickel, re-zero, then place it back. The reading should now display 5.00g. Test with two nickels (10.00g) and four nickels (20.00g) to verify linearity across the range. If readings drift significantly at higher weights, your scale may need servicing or replacement.
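
The linearity check in Step 6 can be written down as a small script. This is a minimal sketch: `check_linearity` is a hypothetical helper, and the 0.05g pass/fail threshold is an assumption chosen for illustration, not a manufacturer specification.

```python
NICKEL_NOMINAL_G = 5.00  # nominal nickel mass used throughout this guide


def check_linearity(readings: dict[int, float], tolerance_g: float = 0.05) -> dict[int, float]:
    """Return {nickel count: error in grams} for readings outside tolerance.

    `readings` maps the number of nickels on the platform to the value
    the scale displayed. `tolerance_g` is an assumed threshold.
    """
    failures = {}
    for count, shown in readings.items():
        error = shown - count * NICKEL_NOMINAL_G
        if abs(error) > tolerance_g:
            failures[count] = round(error, 2)
    return failures


if __name__ == "__main__":
    # Hypothetical readings taken with one, two, and four nickels.
    print(check_linearity({1: 5.00, 2: 10.01, 4: 20.12}))
```

With the hypothetical readings shown, only the four-nickel reading fails, with an error of 0.12g. An error that grows with load in this way points to a span problem rather than a zero offset.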

Common Calibration Problems and How to Fix Them

Even after following the steps above, some scales behave unpredictably. Here are the most frequent issues and their practical solutions.

The Scale Won't Hold Zero

If the display drifts between -0.1g and +0.1g with nothing on the platform, the most likely culprits are vibration, air movement, or a dying battery. Replace the batteries first — low voltage is a common and overlooked cause of erratic readings. If drift continues, relocate the scale and shield it from air currents.

Calibration Mode Won't Activate

Some entry-level scales, particularly those sold without a user manual, have calibration locked behind a factory reset procedure. Try holding the power button for 10 seconds until the display resets. Others require a specific sequence of button presses that varies by brand. Searching the model number alongside "calibration procedure" online usually surfaces the correct steps.

The Reading Is Consistently Off by a Fixed Amount

If your nickel consistently reads 4.7g or 5.3g despite multiple calibration attempts, the scale's internal adjustment range may be exhausted, or the load cell has drifted beyond what software correction can fix. At this point, replacing the scale is more economical than repairing it, especially for units priced under $50.

Readings Vary Based on Where the Object Is Placed

Placing the same nickel in the corner versus the center of the platform produces different readings on some scales. This indicates a problem with the load cell configuration. High-quality scales use multiple load cells or a single precision cell with corner correction. Budget scales often use a single cell with no corner correction, making consistent centering of items mandatory.
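
A corner test makes this problem easy to quantify: weigh the same nickel at the center and at each corner, then look at the spread. The sketch below is illustrative only. `corner_spread` and the position labels are hypothetical names, and the readings are made-up example values.

```python
def corner_spread(readings_g: dict[str, float]) -> float:
    """Return the gap in grams between the highest and lowest reading.

    `readings_g` maps a platform position to the displayed weight of
    the same object placed at that position.
    """
    values = list(readings_g.values())
    if not values:
        raise ValueError("at least one reading is required")
    return round(max(values) - min(values), 3)


if __name__ == "__main__":
    readings = {
        "center": 5.00,
        "front_left": 5.12,
        "front_right": 4.95,
        "back_left": 5.08,
        "back_right": 4.91,
    }
    print(f"corner spread: {corner_spread(readings)}g")
```

For these example readings the spread is 0.21g. A spread much larger than the scale's display resolution suggests there is no corner correction, so items should always be centered.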

Nickel Calibration vs. Certified Weight Sets: Which Should You Use?

The nickel method is convenient but not authoritative. Here is a comparison to help you decide which approach suits your situation.

| Method | Cost | Accuracy | Legal for Trade? | Best For |
|---|---|---|---|---|
| U.S. Nickel | $0.05 | ±0.194g (tolerance) | No | Quick personal checks |
| Class M1 Weight Set | $15–$40 | ±0.005g | Conditional | Small business, lab work |
| NIST-Traceable Weights | $100–$500+ | ±0.001g or better | Yes | Regulated industries |
| Professional Calibration Service | $75–$300/visit | Traceable to NIST | Yes | Legal-for-trade, truck scales |

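For personal use such as weighing coffee or portioning food, the nickel method is perfectly adequate. Tasks that depend on much finer precision than the coin's ±0.194g tolerance, such as measuring powder charges when reloading ammunition, call for certified check weights rather than a coin. For any commercial transaction where weight determines price, you need at minimum NIST-traceable weights and ideally a certified calibration service on a documented schedule.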

How Truck Scale Calibration Works — A Completely Different Process

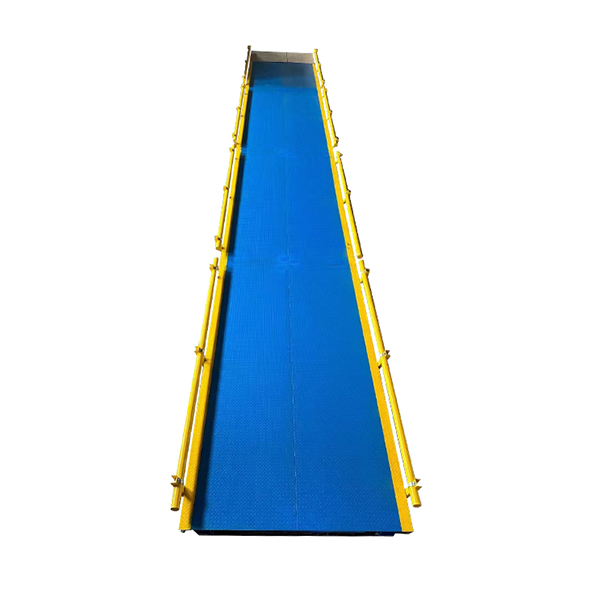

A truck scale, also referred to as a weighbridge, operates under very different accuracy and regulatory demands than a kitchen or pocket scale. While the nickel method is handy for small devices, calibrating a truck scale involves state inspectors, multi-ton certified test weights, and strict legal requirements.

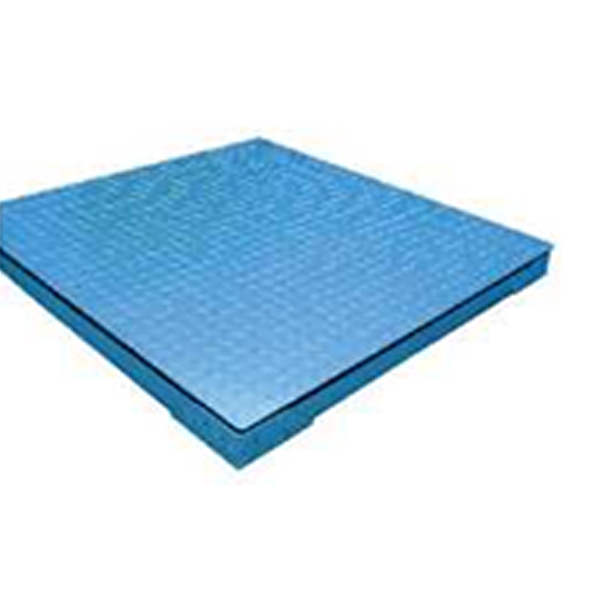

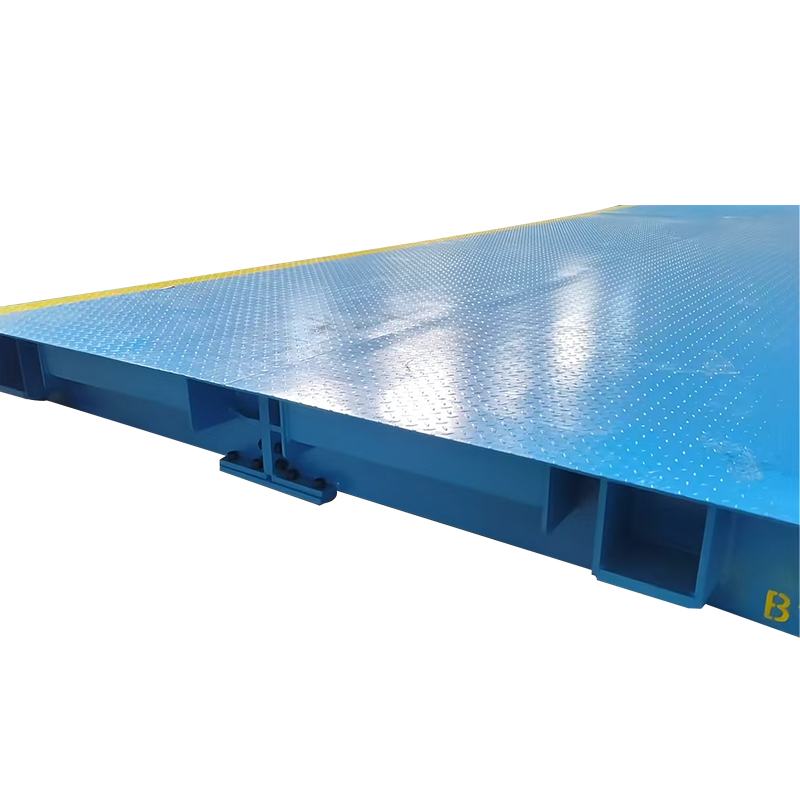

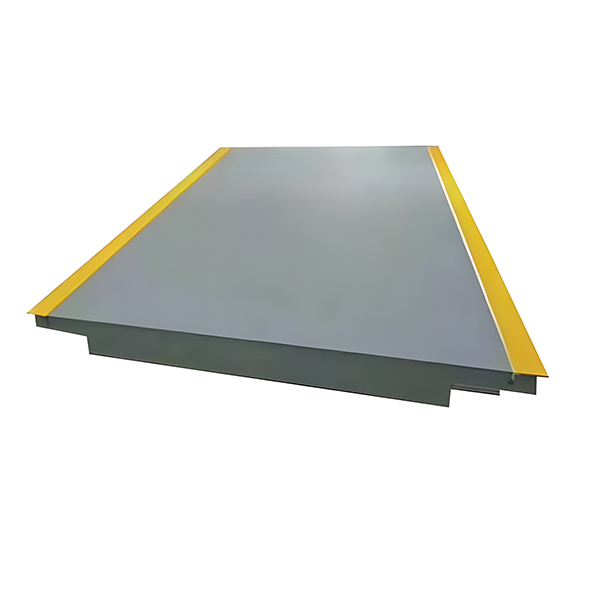

Most truck scales have a maximum capacity between 80,000 and 100,000 pounds (approximately 36,000 to 45,000 kg), sized to cover the 80,000-pound federal gross vehicle weight limit for U.S. Interstate highways. The scale deck may be 10 to 70 feet long, embedded in concrete, and equipped with 6 to 12 load cells wired in parallel. Each load cell must be checked individually for output accuracy, and the entire system must be verified as a unit.

Who Performs Truck Scale Calibration?

In the United States, truck scale calibration is governed by the National Institute of Standards and Technology (NIST) Handbook 44, as well as individual state weights and measures laws. Most states require annual calibration by a licensed scale technician or a state inspector. Industries that rely heavily on truck scales — agriculture, quarrying, waste management, logistics — often schedule calibration every 6 months or after any significant mechanical event, such as a lightning strike or a high-impact vehicle collision with the scale deck.

The Certified Test Weight Method

The most common truck scale calibration method uses a test truck of known weight, often a tandem-axle truck loaded with certified cast iron or steel weights, positioned at several points along the scale deck. The technician records the scale's reading at each position and compares it to the certified weight of the vehicle. Adjustments are made at the junction box or through the digital indicator until readings fall within the allowable tolerance, which under NIST Handbook 44 is ±0.1% of the applied load for most commercial applications.
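
The position-by-position comparison can be sketched in a few lines. This is an illustration, not a substitute for the official test procedure. The helper names and position labels are hypothetical, and the default tolerance is the ±0.1% figure quoted above; the actual tolerance for a given scale should come from the applicable code and the inspector.

```python
def within_tolerance(certified_lb: float, reading_lb: float, tolerance_pct: float = 0.1) -> bool:
    """True if the reading is within tolerance_pct percent of the certified load."""
    allowed_lb = certified_lb * tolerance_pct / 100.0
    return abs(reading_lb - certified_lb) <= allowed_lb


def failing_positions(
    certified_lb: float,
    readings_by_position: dict[str, float],
    tolerance_pct: float = 0.1,
) -> list[str]:
    """Return the deck positions whose readings fall outside tolerance."""
    return [
        position
        for position, reading in readings_by_position.items()
        if not within_tolerance(certified_lb, reading, tolerance_pct)
    ]


if __name__ == "__main__":
    # Hypothetical test: a 50,000 lb certified load allows a 50 lb error.
    readings = {"entry end": 50_020, "center": 49_990, "exit end": 50_080}
    print(failing_positions(50_000, readings))
```

In this made-up run only the exit end fails, reading 80 pounds high against a 50-pound allowance. A failure at one end of the deck usually directs attention to the load cells or hardware at that end.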

The Substitution Method for Truck Scales

When certified test weights of sufficient mass are unavailable, technicians may use the substitution method: a vehicle of unknown but stable weight is weighed on a reference scale, then driven to the scale being calibrated. This method is less preferred because it introduces uncertainty from the reference scale's accuracy, but it is acceptable in some jurisdictions when properly documented.

Electronic Calibration and Load Cell Trimming

Modern truck scales use digital load cell systems that allow individual cell output to be trimmed electronically. The technician connects a laptop or handheld device to the junction box and views live millivolt output from each cell. Cells producing significantly different outputs under the same applied load indicate mechanical problems — debris under the scale, damaged load cells, or binding at the check rods. These physical issues must be resolved before electronic trimming, because software correction cannot compensate for structural problems.
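
One simple way to spot a suspicious cell is to compare each cell's output against the median of all cells. The sketch below assumes the readings were taken with the same test load applied over each cell in turn. The function name, the cell labels, and the 5% threshold are all assumptions for illustration; real diagnostic software will use its own criteria.

```python
from statistics import median


def flag_outlier_cells(outputs_mv: dict[str, float], max_deviation_pct: float = 5.0) -> list[str]:
    """Return labels of cells whose output deviates from the median.

    `outputs_mv` maps a cell label to its millivolt output under the
    same applied load. `max_deviation_pct` is an assumed threshold.
    """
    mid = median(outputs_mv.values())
    if mid == 0:
        raise ValueError("median output is zero; relative deviation is undefined")
    return [
        cell
        for cell, mv in outputs_mv.items()
        if abs(mv - mid) / abs(mid) * 100 > max_deviation_pct
    ]


if __name__ == "__main__":
    outputs = {
        "cell 1": 1.02, "cell 2": 1.01, "cell 3": 1.00,
        "cell 4": 0.82, "cell 5": 1.03, "cell 6": 1.01,
    }
    print(flag_outlier_cells(outputs))
```

Here "cell 4" is flagged because it reads almost 19% below the median. A flagged cell is a prompt to inspect for debris, damage, or binding before any trimming is attempted.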

Signs Your Truck Scale Needs Calibration

Truck scale operators often notice accuracy problems before a formal inspection. Watch for these warning signs:

- Vehicles that consistently weigh differently on your truck scale versus a nearby state weigh station by more than 500 pounds

- Readings that change depending on which part of the scale deck the axle is positioned on

- The indicator displaying an error code related to load cell imbalance

- Visible damage to the scale deck, check rods, or load cell mounting hardware

- A recent flood, freeze-thaw cycle, or earthquake that may have shifted the scale foundation

- Legal complaints or chargebacks from customers disputing load weights

Any of these situations warrants immediate inspection by a qualified technician rather than waiting for the annual calibration cycle.

Truck Scale Calibration Frequency and Regulatory Requirements

The required calibration frequency for a truck scale depends on jurisdiction, industry, and usage volume. The table below summarizes typical requirements across common sectors.

| Industry / Use | Minimum Frequency | Regulatory Body | Notes |
|---|---|---|---|
| Commercial grain trade | Annually | USDA / State W&M | Before each harvest season recommended |
| Aggregate / quarry | Annually or semi-annually | State W&M | High vibration environments accelerate drift |
| Solid waste / recycling | Annually | State W&M / EPA permits | Permit conditions may require more frequent checks |
| Freight / logistics | Annually | State W&M / FMCSA | High-volume sites often calibrate quarterly |
| Mining | Quarterly to annually | MSHA / State W&M | Corrosive environments shorten load cell lifespan |

Beyond regulatory minimums, high-volume operations benefit from more frequent calibration checks. A truck scale processing 200 or more transactions per day accumulates mechanical wear quickly, and even a 0.5% error at that volume can result in significant financial discrepancies over a quarter.

Maintaining Scale Accuracy Between Calibration Events

Calibration is a point-in-time event. What happens between calibrations determines how long that accuracy holds.

For Small Scales

- Store the scale in a stable temperature environment. Avoid leaving it in a vehicle overnight in winter — cold cycles stress the load cell and the solder joints on the circuit board.

- Clean the platform regularly. Even a thin film of dried liquid on the platform can add measurable mass or cause mechanical friction.

- Replace batteries proactively. Most digital scales show measurement drift in the last 20% of battery life, well before the low-battery indicator activates.

- Run a quick nickel check weekly if the scale is used daily for any purpose where accuracy matters.

For Truck Scales

- Keep the pit (the space below the scale deck) clean and dry. Standing water corrodes load cells and wiring within months.

- Inspect bumper bolts and check rods monthly. Loose hardware causes mechanical binding that shows up as erratic readings.

- Keep a calibration log. Document every service visit, adjustment, and anomalous reading. This log is often required for legal-for-trade certification and is invaluable when troubleshooting recurring problems.

- Avoid driving overloaded vehicles across the scale. Repeated overloading can permanently deform load cells. Each cell is rated for a maximum safe overload, typically about 150% of its rated capacity, beyond which permanent damage can occur.

- Use a reference vehicle of known weight on a weekly or monthly basis to spot-check accuracy between professional calibration visits.
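
A calibration log does not need special software. The sketch below shows one possible record layout and a CSV export using only the Python standard library. The `LogEntry` fields and the `to_csv` helper are hypothetical choices, not a format required by any regulator.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class LogEntry:
    when: date
    event: str           # e.g. "service visit", "spot check", "anomalous reading"
    reference_lb: float  # known weight of the reference vehicle or test weights
    reading_lb: float    # what the scale displayed
    notes: str = ""

    @property
    def error_pct(self) -> float:
        """Signed error of the reading as a percentage of the reference."""
        return (self.reading_lb - self.reference_lb) / self.reference_lb * 100


def to_csv(entries: list[LogEntry]) -> str:
    """Serialize log entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(LogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


if __name__ == "__main__":
    entry = LogEntry(date(2024, 3, 1), "spot check", 32_000, 32_040, "reference truck")
    print(f"error: {entry.error_pct:.3f}%")
    print(to_csv([entry]))
```

The example spot check reads 40 pounds high on a 32,000-pound reference vehicle, an error of 0.125%. Tracking this figure over time shows drift well before it becomes a failed inspection.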

The Legal and Financial Stakes of Inaccurate Scale Calibration

For small scales, poor calibration means inaccurate recipes or inconsistent product portions. For a truck scale, the stakes are substantially higher.

A truck scale reading 1% high on a 40,000-pound load overstates the weight by 400 pounds. In grain trading, where payment is per bushel and a bushel of corn weighs 56 pounds, that translates to roughly 7 bushels — worth approximately $28 at $4.00/bushel. Across 100 daily transactions, that single percentage point error costs a farmer or buyer $2,800 per day. Over a 90-day harvest season, that is $252,000 in mismeasured grain.
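
The arithmetic above can be reproduced, and adapted to other loads or prices, with a short script. `mismeasured_value` is a hypothetical helper; the inputs are the figures from the paragraph above. Unlike the text, the script does not round down to 7 bushels, so its results come out slightly higher.

```python
LB_PER_BUSHEL_CORN = 56  # pounds per bushel of corn, from the text above


def mismeasured_value(
    load_lb: float,
    error_pct: float,
    price_per_bushel: float,
    loads_per_day: int,
    days: int,
) -> dict[str, float]:
    """Return the weight error and its dollar value per load, per day, and per season."""
    error_lb = load_lb * error_pct / 100
    bushels = error_lb / LB_PER_BUSHEL_CORN
    per_load = bushels * price_per_bushel
    per_day = per_load * loads_per_day
    return {
        "error_lb": error_lb,
        "bushels": bushels,
        "per_load": per_load,
        "per_day": per_day,
        "per_season": per_day * days,
    }


if __name__ == "__main__":
    result = mismeasured_value(40_000, 1.0, 4.00, 100, 90)
    for name, value in result.items():
        print(f"{name}: {value:,.2f}")
```

Without rounding, the 400-pound error is about 7.14 bushels, or roughly $28.57 per load, $2,857 per day, and about $257,000 over a 90-day season.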

Beyond financial loss, operating an uncalibrated legal-for-trade scale is a regulatory offense. Fines vary by state but commonly range from $500 to $10,000 per violation, and repeat offenders can face suspension of their business license. In some states, weights and measures inspectors conduct unannounced audits, and a failed inspection can result in the scale being sealed and taken out of service until a licensed technician corrects and recertifies it.

For logistics companies, an out-of-tolerance truck scale can lead to overweight citations if loads are released based on inaccurate readings, since the party moving the load remains responsible for keeping it within legal weight limits regardless of what its own scale reported.

Choosing the Right Scale for Your Application

Part of maintaining calibration accuracy is starting with equipment appropriate for the task. A scale designed for 200g cannot be pressed into service weighing 2kg loads and expected to remain accurate. The same principle applies at the industrial level.

Matching Capacity to Application

As a general rule, operate a scale within 10% to 90% of its rated capacity for best accuracy. Near the bottom of its range, a scale has poor resolution relative to the load. Near the top, the load cells are under high mechanical stress and wear faster. For a truck scale rated at 80,000 pounds, this means the optimal working range is roughly 8,000 to 72,000 pounds. A truck loaded to the full 80,000-pound legal limit falls outside that band, so a scale that regularly weighs such loads is better specified with a higher rated capacity.
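
The 10% to 90% rule of thumb is easy to apply to any rated capacity. The sketch below uses hypothetical helper names, and the fractions are the guideline values from the paragraph above rather than a formal specification.

```python
def working_range(capacity: float, low_frac: float = 0.10, high_frac: float = 0.90) -> tuple[float, float]:
    """Return the (low, high) load band for a given rated capacity."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    return (capacity * low_frac, capacity * high_frac)


def in_working_range(load: float, capacity: float) -> bool:
    """True if the load falls inside the recommended band for this capacity."""
    low, high = working_range(capacity)
    return low <= load <= high


if __name__ == "__main__":
    print(working_range(80_000))             # truck scale, pounds
    print(working_range(200))                # pocket scale, grams
    print(in_working_range(80_000, 80_000))  # fully loaded truck on an 80,000 lb scale
    print(in_working_range(80_000, 100_000)) # same truck on a 100,000 lb scale
```

The last two lines show why capacity matters: an 80,000-pound load sits outside the band of an 80,000-pound scale but inside the band of a 100,000-pound scale.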

Platform vs. Axle Truck Scales

Full-platform truck scales weigh the entire vehicle in a single pass, providing the most accurate gross vehicle weight. Axle scales weigh one axle group at a time and sum the readings — useful for portable or temporary installations but generally less accurate than a full-length platform scale, particularly when the vehicle is still moving during the measurement. Axle scales require their own calibration procedure and have different tolerance requirements under NIST Handbook 44.

Digital vs. Analog Indicators

Modern truck scales almost universally use digital indicators with internal analog-to-digital converters. These systems offer advantages including data logging, connectivity to truck management software, and electronic calibration without manual span adjustments. However, they introduce their own failure modes — firmware bugs, USB communication errors, and display glitches that can be mistaken for calibration problems. Always rule out indicator-level issues before assuming a load cell problem.

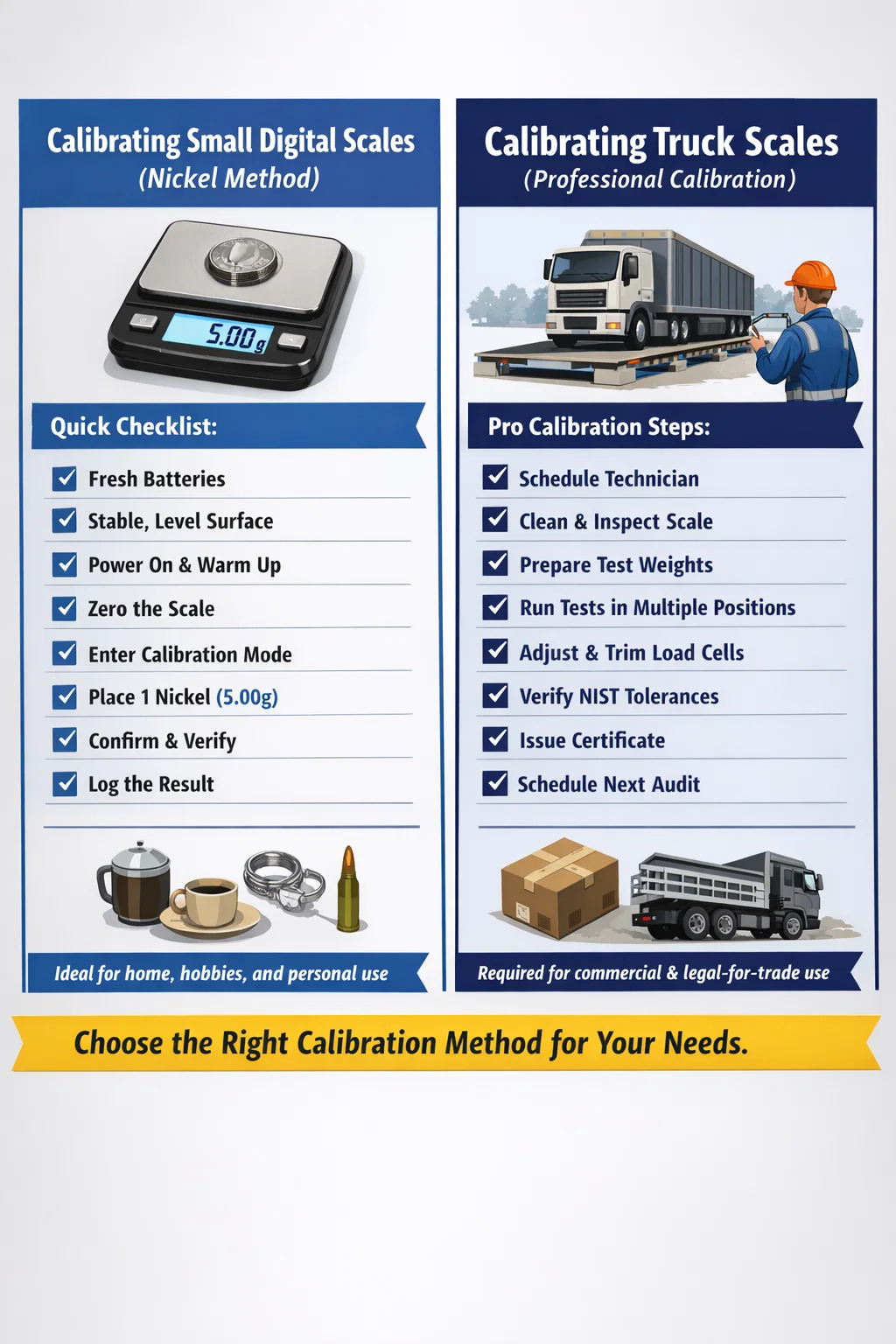

Quick Reference: Calibration Checklist for Small Scales and Truck Scales

Use the appropriate list for your scale type before and after calibration.

Small Digital Scale (Nickel Method)

- Verify batteries are fresh or fully charged

- Place on a flat, vibration-free surface

- Power on and wait 3–5 minutes

- Zero the scale with nothing on the platform

- Enter calibration mode per manufacturer instructions

- Place one or more U.S. nickels (5.00g each) centered on the platform

- Confirm the scale accepts the calibration weight

- Verify with multiple known weights across the scale's range

- Document the date and result

Truck Scale (Professional Calibration)

- Schedule a licensed scale technician or state inspector

- Clean the scale deck and pit before the visit

- Inspect check rods, bumper bolts, and load cell cables visually

- Confirm indicator firmware is current

- Prepare certified test weights or a certified test vehicle

- Run the test weight across multiple positions on the deck

- Adjust load cell trim or span as needed

- Verify all positions meet NIST Handbook 44 tolerances

- Obtain and file the calibration certificate

- Schedule the next calibration date and post it visibly at the scale
